The world of Information Technology and software development is full of new and upcoming platforms to handle every upcoming challenge in handling a job.

Data Science and Big Data are such two names in of the cluster. Designed and developed to handle huge volume of data units, both these have a correlated functionality but on a different edge.

Here the main differences between Big Data & Data Science

Big Data is basically used to store huge data volumes that are difficult to be processed accurately using the traditional applications on a single computer. Big Data uses the processing process by beginning with the raw data, not aggregated.

However, Data Science better known as data-driven science relates to an interdisciplinary field of using the scientific methods, systems and processes for pulling and managing the right knowledge or insights from this data in varied forms of either a structured or an unstructured form, just like data mining.

A Small Introduction on Big Data, Data Science, Hadoop and R Language

BIG DATA

Big data involves the right description and storage of large volumes of data in structured and unstructured form on a single computer. This data helps in running the day to day business operation. It not just holds the huge amount of data but also keeps the organizations’ data intact for useful business matters. It is concerned with an evolving term of describing any amount of voluminous structured, semi-structured and un-structured data that can be dug in for useful information processing.

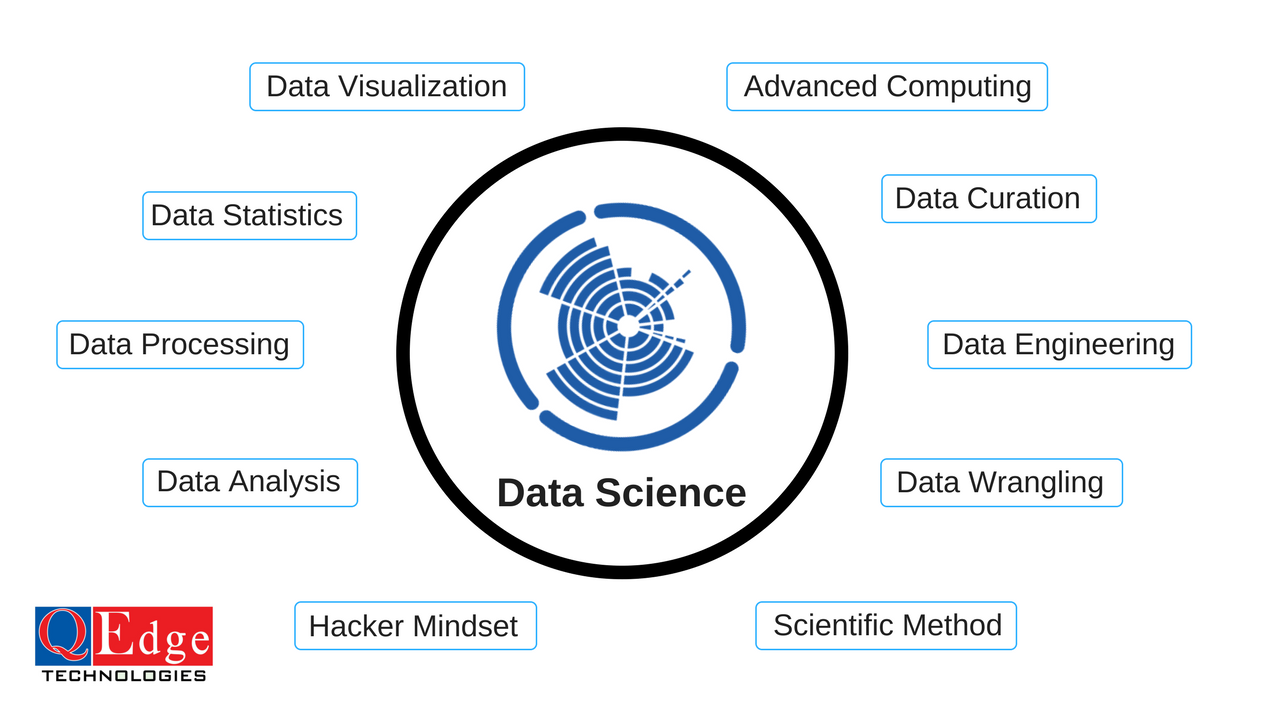

Data Science

Data science is considered as an interdisciplinary field involving specific scientific methods, systems and processes for extracting the right knowledge or insights from the data of various forms- structured or unstructured. This is similar to data mining but not completely data mining.

This is a “concept to unify statistics, data analysis and their related methods” for “understanding and analyzing the actual phenomena” with your data. It takes into account the tools, techniques and theories of multiple fields of mathematics, information science, statistics and computer science, particularly from the sub-domains of data mining, cluster analysis, machine learning, visualization, classification and databases.

HADOOP

Hadoop is another open source and Java-based programming framework that supports the processing and storage of enormous sets of large data sets in a completely distributed computing environment. This is a part of the Apache project being sponsored by the Apache Software Foundation. This makes running its applications on systems along with the thousands of commodity hardware nodes, and handling thousands of terabytes of data, possible. With this distributed file system based facilitates enables with rapid data transfer rates; it allows the system to even continue operation in case of a node failure. There are various functional modules of Hadoop, including Hadoop Common as the kernel, Hadoop Distributed File System (HDFS) for data storage, Hadoop Yet another Resource Negotiator (YARN) for resource management and its scheduling for the user applications; and the Hadoop MapReduce.

R-Language

R is a known as the programming language and the software environment for handing statistical analysis, its reporting and its graphics representation. Having a basic understanding of the terms related to computer programming is enough to train you as a professional. Created by Ross Ihaka and Robert Gentleman at the University of Auckland, New Zealand, it is presently developed in association with the R Development Core Team.

It was named R due to the first letter from the first name of the two R authors (Robert Gentleman and Ross Ihaka).

Roles and Responsibilities of Big Data & Data Science Engineer

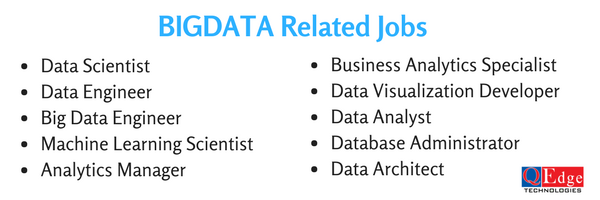

Any professional getting trained in the field of Big Data or Data Science has a great scope of becoming a professional from the below mentioned domains-

- Data Developer

- Data Analyst/ The Business Analyst

- The Database Administrator

- Data and Analytics Manager

- Data Architect

- Data Tester

- Data Scientist

- The Statistician

Basically called as the Data Engineers, they have a predefined set of roles and responsibilities in their relative field. They are basically considered to develop, construct, test, and maintain the highly scalable functioning operations of the data management systems.

Mentioned below is a specified role description of each-

Data Developer

Data developers or engineers are responsible for the collection and data storage data for real-time or batch processing, and provide these data to the data scientists for analysis via an API.

Data Analyst

The use of languages such as-R, Python and SQL is required for a data analyst’s profile. Similar to the data scientist role, they also require a broad skill-set of combined technical and analytical knowledge. Such profiles are looked for by the giants such as HP and IBM.

The Business Analyst

Being the least technical profile, this role of this person is creating a correlation between the various business processes and manages them. He is a middle person between the business people and the technical brats. Such profiles are searched by companies like Uber, Dell and Oracle.

The Database Administrator

As a database administrator, one needs to make sure that the database is accessible to each and every stakeholder of the organizations and the related functions and operations of the same are performed rightly and in a safe manner. Thus requires languages like- SQL, XML and even Java.

Data and Analytics Manager

The data and analytics manager steers the direction of the data science team. This individual consolidates strong and specialized skills in a various arrangement of advancements (SQL, R, SAS) with the social aptitudes required to deal with a group. It’s a hard employment but if you feel up for the challenge, make sure to have a look at offerings from companies such as Coursera, Slack. Luckily, with a yearly average salary of $116k, the financial compensation is in line with the high requirements.

Data Architect

Industries like banking and FMCG are mostly looking for these professionals for integrating, centralizing, protecting and maintaining their data sources. The data architects have to work with the latest technologies like Spark and keep an eye of the architectural setup in relation to the data.

Data Tester

The job of a tester is similar to a testing profile, where the professional has to validate the data collection and management process for higher level of data evaluation.

Data Scientist

Data Scientist is one of the highly paid job titles in this field and could even take you to a yearly average salary of $118, 70. The role of such professionals is to handle the raw data with use of the latest technologies and techniques for performing required data analysis, process and analyze the data for an informative gateway with the database.

The Statistician

The job title is being replaced by many different fancy job titles. This is among the pioneer jobs in the industry and involves the knowledge of statistical theories and methodologies. The role involves reaping the useful information from the data and transforming it into certain actionable insights.